Part 1: http://www.dailydoseofexcel.com/archives/2010/02/26/powerpivot-part-1-of-4/

Since Excel 2007 (and later) can handle a million rows of data, I figured it made sense to explore PowerPivot (PP) with a data set larger than that. In addition, I have had a long time curiosity about the US census data. So, this seemed an appropriate time to combine the two. After some exploration of www.census.gov, I learned of http://usa.ipums.org/usa/index.shtml This is a project of the Minnesota Population Center of the University of Minnesota.

Do note that this post is not meant to be a step-by-step tutorial on how to use the IPUMS website. While it took some time, it took even more effort to understand the census data but an explanation of the data is also not my intent with this post. The focus is on loading a large data set in PowerPivot.

The final data set I downloaded expanded to nearly 18 million rows of data. But, it took a while before I got to the point of getting that data set prepped for PP.

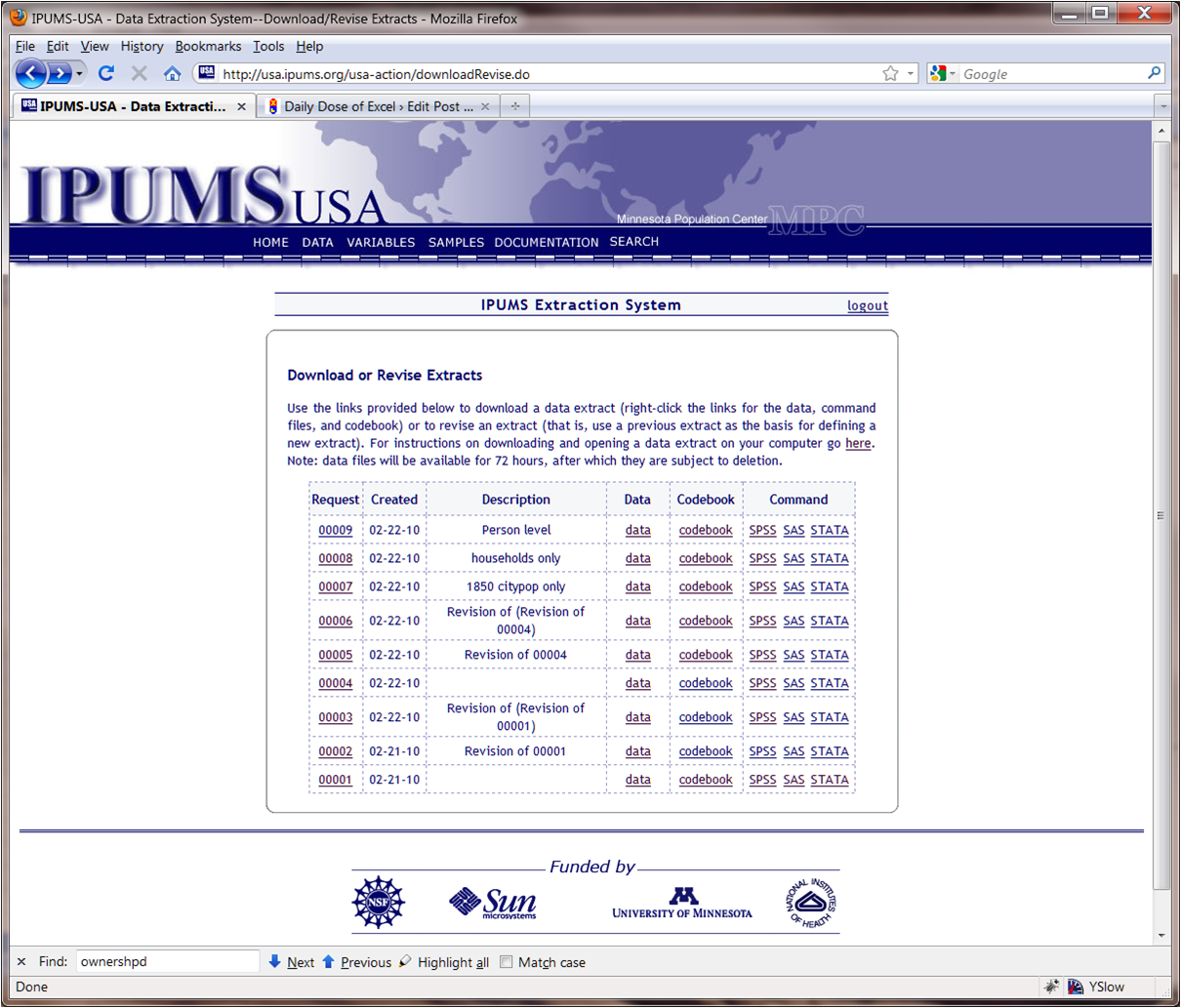

A relatively superficial exploration of the IPUMS site led to two conclusions. One, the result of ‘analyze data online’ would yield results online but the result sets were intentionally limited in size. Two, trying to get detailed data through the ‘Create an Extract’ or the ‘Download or Revise Extracts’ led to large data sets but of the kind that were intended for use with SPSS, SAS, or STATA. The data set itself consisted of fixed length columns. In addition, there was a delimiter at the end of each row record (discovered through trial and error).

In addition to the data set, the website provided the command sets to load the data into SPSS, SAS, and STATA.

The SPSS instructions were fairly helpful in understanding the layout and the content of the data (other than the secret delimiter).

So, for one data set the columns were

datanum 5-6

serial 7-14

numprec 15-16

hhwt 17-20

statefip 21-22

county 23-26

urban 27

city 28-31

citypop 32-36

urbpop 37-41

ownershpd 42-43

mortotal 44-47

mortamt1 48-52

rentgrs 53-56

hhincome 57-63

pernum 64-67

perwt 68-71

age 72-74

sex 75

marst 76

raced 77-79

gradeattd 80-81

occ1950 82-84

ind1950 85-87

inctot 88-94

ftotinc 95-101

In addition, the SPSS instructions also contained information about the text values associated with the numeric codes in the data set. For example, ownershpd values were:

00 “N/A”

10 “Owned or being bought”

11 “Check mark (owns?)”

12 “Owned free and clear”

13 “Owned with mortgage or loan”

20 “Rented”

21 “No cash rent”

22 “With cash rent”

The above information is important since we can create Excel tables of these number-to-text maps and link them to the data table in PP.

What made the process of getting the data in PP challenging was that PP will not read fixed column width data from a text file though it will read comma delimited text files. I would have thought this would be elementary but I couldn’t find any way to get PP to do so. Maybe, I missed something that would have made the rest of the exercise below unnecessary.

So, as far as I could tell, I had to convert the fixed-column-width text file into a file where the columns were delimited by some character. I picked the comma. But, I couldn’t use Excel (too many records) or Word (too large a file) to do the conversion. I also tried Notepad and WordPad before falling back on true and tried VB(A).

I did not start with the 18 million record data set. I used the smaller data set for a single census from the 19th century to explore the structure of the data. Through trial and error I discovered the secret delimiter at the end of each record. I also discovered that the software did not summarize the data in any way. The code that I felt confident I could use for data from all the censuses combined is below.

It’s not elegant. It’s not flexible. It’s not meant to be either. At some point I might be tempted to paste the information about the column names and widths into a Excel worksheet and have the code automagically work off that. But, for now, the goal was to prep the data for PP.

One interesting thing I did learn was that using FreeFile() twice in succession yields the same integer value. That, of course, leads to an error when opening the 2nd file. So, the correct approach is to get a free file number, open that file, and then get a second free file number for another file.

Also, if one were to add up the column widths of the various fields one would get 101 but the record being read has 102 characters. The last byte is the delimiter that I want to drop.

Sub writeOneRec(OutF As Long, Rslt As String)

Dim Yr As Integer, DataNum As Integer, Serial As Long, _

NumPrec As Integer, HHwt As Integer, _

StateFIP As Integer, County As Integer, Urban As Integer, _

City As Integer, CityPop As Long, _

UrbPop As Integer, _

OwnerShip As Integer, MorgTotal As Integer, _

MorgAmt1 As Integer, RentGross As Integer, _

HHIncome As Long, _

PerNum As Long, PerWt As Integer, Age As Integer, _

Sex As Integer, MarSt As Integer, Race As Integer, _

GradeAtt As Integer, Occ1950 As Integer, Ind1950 As Integer, _

IncTot As Long, FamTotInc As Long

On Error Resume Next

Yr = Left(Rslt, 4)

DataNum = Mid(Rslt, 5, 2)

Serial = Mid(Rslt, 7, 8)

NumPrec = Mid(Rslt, 15, 2)

HHwt = Mid(Rslt, 17, 4)

StateFIP = Mid(Rslt, 21, 2)

County = Mid(Rslt, 23, 4)

Urban = Mid(Rslt, 27, 1)

City = Mid(Rslt, 28, 4)

CityPop = Mid(Rslt, 32, 5)

UrbPop = Mid(Rslt, 37, 5)

OwnerShip = Mid(Rslt, 42, 2)

MorgTotal = Mid(Rslt, 44, 4)

MorgAmt1 = Mid(Rslt, 48, 5)

RentGross = Mid(Rslt, 53, 4)

HHIncome = Mid(Rslt, 57, 7)

PerNum = Mid(Rslt, 64, 4)

PerWt = Mid(Rslt, 68, 4)

Age = Mid(Rslt, 72, 3)

Sex = Mid(Rslt, 75, 1)

MarSt = Mid(Rslt, 76, 1)

Race = Mid(Rslt, 77, 3)

GradeAtt = Mid(Rslt, 80, 2)

Occ1950 = Mid(Rslt, 82, 3)

Ind1950 = Mid(Rslt, 85, 3)

IncTot = Mid(Rslt, 88, 7)

FamTotInc = Mid(Rslt, 95, 7)

On Error GoTo 0

Write #OutF, Yr, DataNum, Serial, NumPrec, HHwt, StateFIP, _

County, Urban, City, CityPop, UrbPop, OwnerShip, _

MorgTotal, MorgAmt1, RentGross, HHIncome, _

PerNum, PerWt, Age, Sex, MarSt, Race, _

GradeAtt, Occ1950, Ind1950, IncTot, FamTotInc

End Sub

Sub openDB9()

Dim X, Rslt As String, OutF As Long

X = FreeFile()

Open “c: usharworkusa_00009.datusa_00009.dat” _

For Binary As #X Len = 102

OutF = FreeFile()

Open “c: usharworkusa_00009.txt” _

For Output As #OutF

Write #OutF, “Yr”, “DataNum”, “Serial”, _

“NumPrec”, “HHwt”, _

“StateFIP”, “County”, “Urban”, _

“City”, “CityPop”, _

“UrbPop”, _

“OwnerShip”, “MorgTotal”, _

“MorgAmt1”, “RentGross”, _

“HHIncome”, _

“PerNum”, “PerWt”, “Age”, _

“Sex”, “MarSt”, “Race”, _

“GradeAtt”, “Occ1950”, “Ind1950”, _

“IncTot”, “FamTotInc”

Rslt = String(102, ” “)

Get #X, , Rslt

Do While Not EOF(X)

writeOneRec OutF, Rslt

Get #X, , Rslt

Loop

‘Stop

Close #X: Close #OutF

End Sub

And, so now, I have a CSV data set ready to load into PowerPivot.

Thanks great stuff.

Try UltraEdit or any other good text editor. UltraEdit will open large files and has column editing.

Maybe, just maybe, there’s a reason real statisticians use real stats software to do real statistical analysis. Something like using the right tool for the task.

Even if you want to use Excel for some part of the analysis, stats software would be much handier for converting data file formats. Like the GNU Project’s PSPP which handles SPSS files. Very flexible, very fast, no pointless reinventing the wheel.